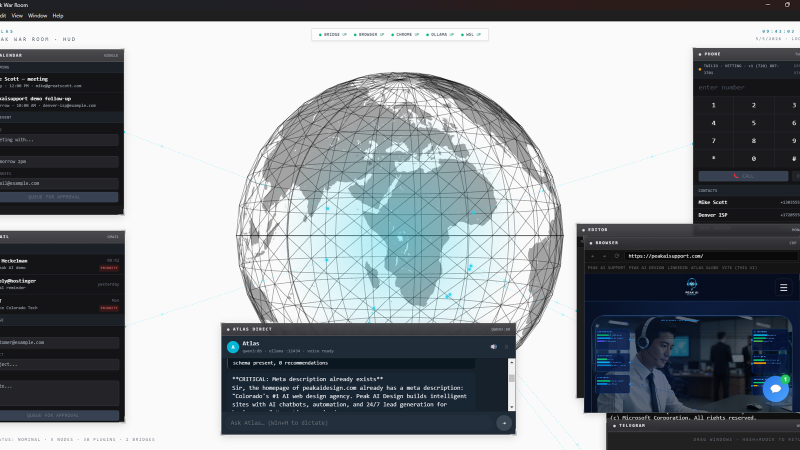

Production AI ops stack — local hardware, your data, your tools

Atlas runs daily operations on your own machine, using a local LLM as the brain and a cloud bridge for heavy reasoning only when needed. It combines a plugin-extensible runtime, a three-bucket permission model, and a voice-driven, Iron Man-style HUD.
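The local-first split could be sketched roughly as follows. This is a minimal illustration, not Atlas's actual router: the task labels, the `route` function, and the stub backends are all hypothetical, and real model clients would replace the lambdas.

```python
from typing import Callable, Dict

# Hypothetical labels that a local classifier might mark as heavy reasoning.
HEAVY_REASONING = {"multi_step_plan", "long_document_analysis"}


def route(task_label: str, prompt: str,
          backends: Dict[str, Callable[[str], str]]) -> str:
    """Send the prompt to the cloud backend only for heavy-reasoning labels."""
    name = "cloud" if task_label in HEAVY_REASONING else "local"
    return backends[name](prompt)


# Stub backends stand in for real model clients (assumption: no network calls).
backends = {
    "local": lambda p: f"[local] {p}",
    "cloud": lambda p: f"[cloud] {p}",
}

print(route("classify_email", "Is this invoice urgent?", backends))
print(route("multi_step_plan", "Plan the Q3 migration", backends))
```

Keeping the escalation rule in one small function makes the local/cloud ratio easy to audit and tune.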

Architecture highlights

- Local-LLM-first — Ollama (qwen3:8b) handles routing and classification on a consumer GPU. Cloud (Claude API) is used only for heavy reasoning. Roughly 95% local, 5% cloud.

- Three-bucket permission model — every action classified before execution: autonomous (reads, drafts, own-property writes), requires-approval (outbound sends, code commits, spend), forbidden (credentials, banking, force-push). Default-deny on unknowns.

- Self-extending — plugin-builder and agent-builder meta-tools let the agent grow new capabilities or spawn sub-agents on demand by dispatching to a coding agent that has filesystem access.

- Hallucination-resistant — Context7 MCP integration supplies current vendor docs to ground responses, reducing stale-API drift.

- Voice-driven — system-native dictation in, free Microsoft neural TTS out. Optional Whisper / OpenAI upgrades.
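
As an illustration of the three-bucket permission model described above, here is a minimal sketch. The action names, the `POLICY` table, and the `classify` and `may_execute` helpers are hypothetical, and it assumes that "default-deny" means unknown actions are treated as forbidden; the real system may handle them differently.

```python
from enum import Enum


class Bucket(Enum):
    AUTONOMOUS = "autonomous"
    REQUIRES_APPROVAL = "requires-approval"
    FORBIDDEN = "forbidden"


# Hypothetical action names mapped to the three buckets.
POLICY = {
    "read_file": Bucket.AUTONOMOUS,
    "draft_email": Bucket.AUTONOMOUS,
    "send_email": Bucket.REQUIRES_APPROVAL,
    "git_commit": Bucket.REQUIRES_APPROVAL,
    "spend_money": Bucket.REQUIRES_APPROVAL,
    "read_credentials": Bucket.FORBIDDEN,
    "git_force_push": Bucket.FORBIDDEN,
}


def classify(action: str) -> Bucket:
    """Classify an action before execution; unknown actions are denied."""
    return POLICY.get(action, Bucket.FORBIDDEN)


def may_execute(action: str, approved: bool = False) -> bool:
    """Return True only if the action's bucket allows it to run now."""
    bucket = classify(action)
    if bucket is Bucket.AUTONOMOUS:
        return True
    if bucket is Bucket.REQUIRES_APPROVAL:
        return approved
    return False  # forbidden, including anything not in POLICY


print(may_execute("draft_email"))                # True
print(may_execute("send_email"))                 # False
print(may_execute("send_email", approved=True))  # True
print(may_execute("wire_transfer"))              # False
```

Because the lookup falls through to the forbidden bucket, adding a new plugin action has no effect until someone explicitly places it in the policy table.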

Outcomes

Drives daily operations across two production businesses. 50+ capability plugins. Open-source architecture overview at github.com/drelf/peak-ai-ops-architecture. Ships with companion MCP bridges so any compliant AI agent (Claude Desktop, Cursor, Continue, OpenClaw) can integrate.